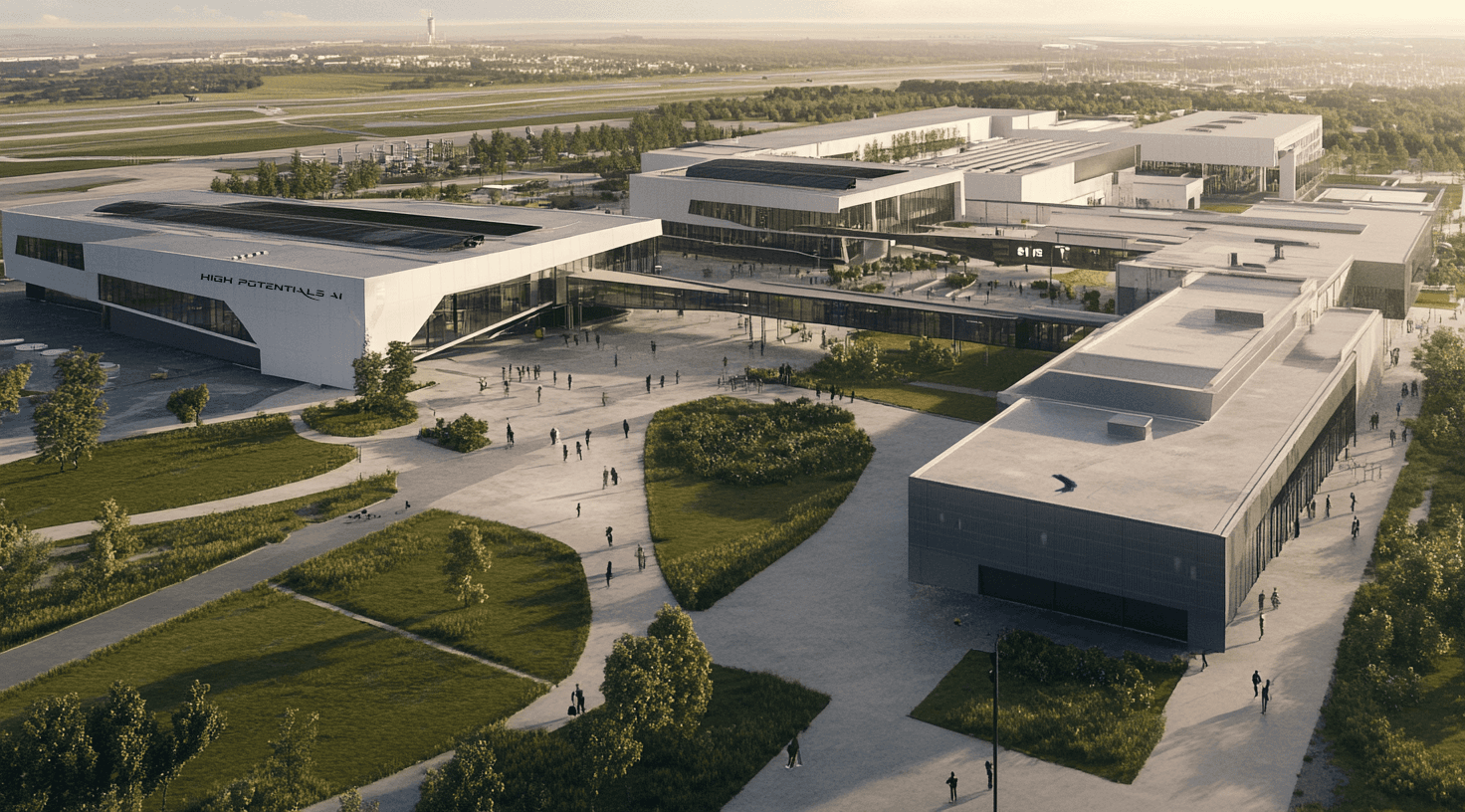

Our Gigafactory of Compute

We’re running the world’s biggest supercomputer, Colossus. Built in 122 days, outpacing every estimate, it was the most powerful AI training system yet. Then we doubled it in 92 days to 200K GPUs. This is just the beginning.

Number of GPUs

200K GPUs

Total Memory Bandwidth

194 Petabytes/s

Network Bandwidth

3.6 Terabits/s per server

Storage Capacity

>1 Exabyte

Latest news


Cost

$0.13